Releases: Opencode-DCP/opencode-dynamic-context-pruning


v3.0.3 - Fix compress subagent refs and metadata tag leaking

What's Changed

- Fix compress tool to rebuild message refs when operating in subagent context

- Add instruction to prevent model from outputting injected XML metadata tags

- Add compress subagent integration test

Full Changelog: v3.0.2...v3.0.3

v3.0.2 - Fix host permissions compatibility

What's Changed

- Replace `findLast` with a compatible alternative in host permissions for broader runtime support
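For context, `Array.prototype.findLast` was standardized in ES2023, so older runtimes may not provide it. A minimal sketch of a compatible replacement is a reverse loop. The helper name `findLastCompat` and the sample rule objects are hypothetical and are not taken from the plugin's source.

```typescript
// Hypothetical helper: returns the last element matching the predicate,
// without relying on Array.prototype.findLast (ES2023).
function findLastCompat<T>(
    items: readonly T[],
    predicate: (item: T, index: number) => boolean,
): T | undefined {
    for (let i = items.length - 1; i >= 0; i--) {
        const item = items[i] as T
        if (predicate(item, i)) return item
    }
    return undefined
}

// Illustrative data only; the real host permission shape may differ.
const rules = [
    { permission: "compress", action: "allow" },
    { permission: "bash", action: "ask" },
    { permission: "compress", action: "deny" },
]

const lastCompressRule = findLastCompat(rules, (rule) => rule.permission === "compress")
console.log(lastCompressRule?.action) // "deny"
```

The loop walks from the end so that later entries win, which matches the semantics of `findLast`.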

Full Changelog: v3.0.1...v3.0.2

v3.0.1 - Prompt and permissions improvements

What's Changed

- Update context management guidance in compress prompts

- Align compress gating with opencode host permissions

- Standardize dcp-system-reminder XML naming convention

- Update demo readme images

Full Changelog: v3.0.0...v3.0.1

v3.0.0

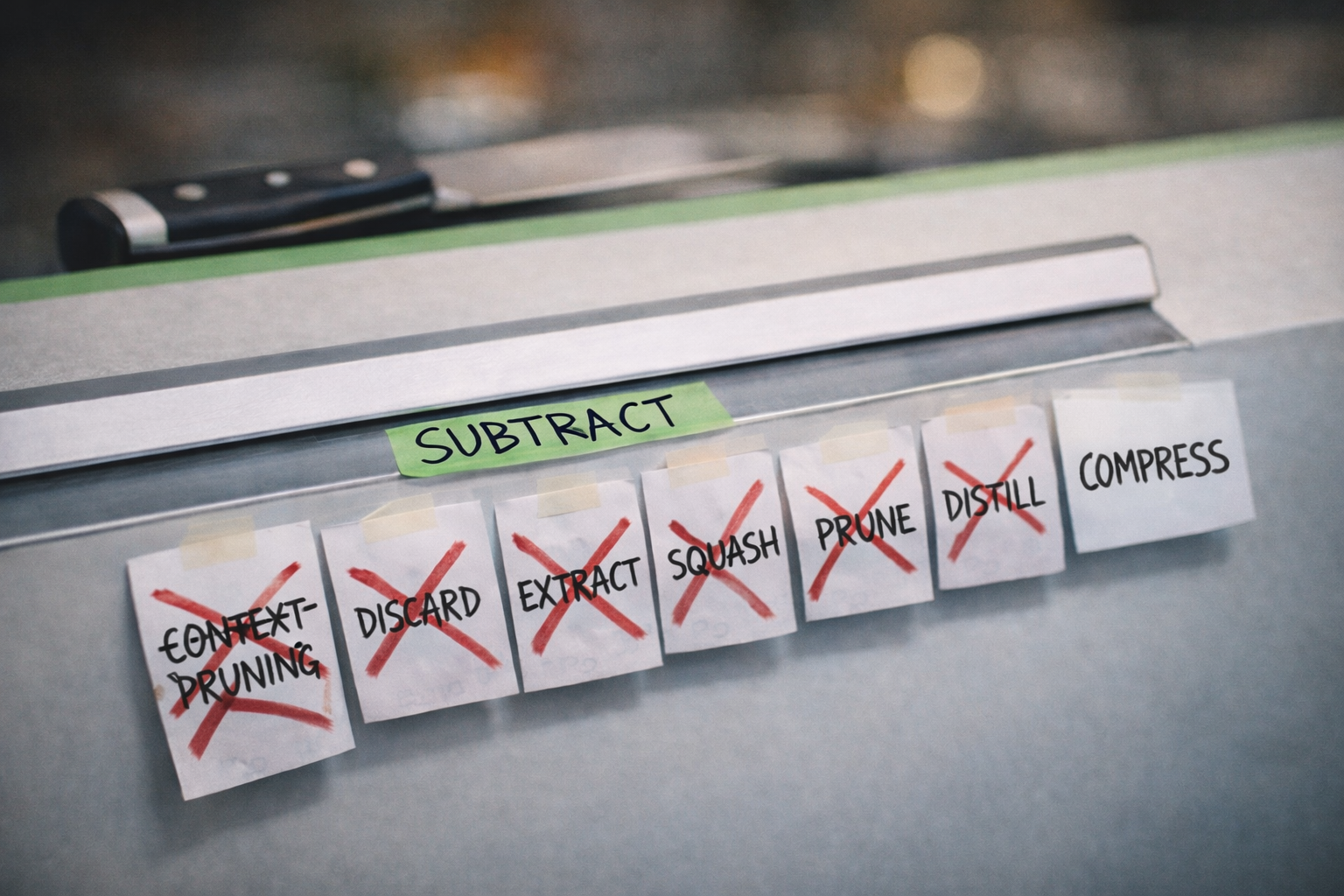

v3.0.0 — Single Compress Tool Architecture

A major release featuring a complete architectural overhaul of the Dynamic Context Pruning system.

Breaking Changes

- The previous 3-tool system (`distill`, `compress`, `prune`) has been replaced with a single `compress` tool. All context management now flows through one unified interface.

New Features

- Decompress / recompress commands: Built-in commands for managing compression blocks

- Subagent support: `experimental.allowSubAgents` enables compression awareness in sub-agent contexts

- Custom prompts: `experimental.customPrompts` allows user-defined prompt overrides

- Flat schema option: `compress.flatSchema` for simplified tool schema presentation

- Protected user messages: `protectUserMessages` prevents user messages from being compressed

- Protected tools with glob patterns: Configure which tool outputs are preserved during compression using glob matching

- Visual progress bar: Real-time compression activity indicator

- Configurable turn nudges: Fine-tune when and how compression nudges appear

- Strengthened manual mode: More robust manual compression control
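To illustrate the glob-based tool protection listed above, here is a rough sketch of how a tool name could be checked against a pattern list. It assumes `*` is the only wildcard. The function names and the sample patterns are hypothetical, and the plugin's actual matching rules may differ.

```typescript
// Convert a simple glob into an anchored RegExp.
// Assumption: only `*` acts as a wildcard; every other character is literal.
function globToRegExp(glob: string): RegExp {
    const escaped = glob
        .split("*")
        .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&"))
        .join(".*")
    return new RegExp(`^${escaped}$`)
}

// Hypothetical check: is this tool's output protected from compression?
function isProtectedTool(toolName: string, patterns: readonly string[]): boolean {
    return patterns.some((pattern) => globToRegExp(pattern).test(toolName))
}

// Illustrative patterns, not defaults shipped by the plugin.
const protectedPatterns = ["todowrite", "mcp_*", "*_search"]

console.log(isProtectedTool("mcp_github_create_issue", protectedPatterns)) // true
console.log(isProtectedTool("bash", protectedPatterns)) // false
```

Anchoring the expression with `^` and `$` keeps a pattern such as `mcp_*` from matching a tool whose name merely contains `mcp_` somewhere in the middle.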

Improvements

- Reduced cache invalidation — Significantly decreases how often DCP causes cache invalidation, fixing Anthropic-related cache issues without needing manual mode

- Infinite conversations — Conversations can now last almost indefinitely; the previous tool pruning approach limited this by leaving user/AI messages untouched

- Simplified model behavior — The model no longer needs to choose between 3 context management tools, reducing decision complexity and improving reliability


Migration

This is a major version bump. Users upgrading from v2.x should review the updated README for the new single-tool configuration. The compress tool now handles all context management operations that were previously split across multiple tools.

Full Changelog: v2.1.8...v3.0.0

v2.2.9-beta0 - New config options and context compression improvements

What's Changed

- Added `protectUserMessages` config option to prevent user messages from being compressed.

- Added `flatSchema` config option for simplified tool schema injection.

- Added wildcard/glob pattern support for `protectedTools` configuration.

- Gated `compress` tool behind a one-shot manual trigger.

- Fixed: insert injected text parts before tool parts in assistant messages.

- Fixed: include `cache.write` in system prompt token calculation.

- Fixed: strip hallucinated tags from merged sub-agent results.

- Consolidated user and assistant turn nudges into a single prompt.

- Show raw LLM summary in `showCompression` notification.
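As a rough illustration of the tag-stripping fix, the sketch below removes injected metadata blocks from a piece of text. It is an assumption about the general approach and not the plugin's implementation. The function name is hypothetical, and `dcp-system-reminder` is used only because that tag name appears elsewhere in these notes.

```typescript
// Hypothetical sketch: remove injected metadata tags from model output.
// Assumption: tag names contain no regular-expression metacharacters.
function stripInjectedTags(text: string, tagNames: readonly string[]): string {
    let result = text
    for (const tag of tagNames) {
        // Paired blocks are removed together with their content.
        const paired = new RegExp(`<${tag}\\b[^>]*>[\\s\\S]*?</${tag}>`, "g")
        // Any leftover opening or closing tag is removed on its own.
        const stray = new RegExp(`</?${tag}\\b[^>]*>`, "g")
        result = result.replace(paired, "").replace(stray, "")
    }
    return result.trim()
}

const merged = "Summary of work.\n<dcp-system-reminder>compress soon</dcp-system-reminder>\nDone."
console.log(JSON.stringify(stripInjectedTags(merged, ["dcp-system-reminder"])))
// "Summary of work.\n\nDone."
```

Whether the content inside a hallucinated tag should be dropped or kept is a design choice; this sketch drops it.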

Full Changelog: v2.2.8-beta0...v2.2.9-beta0

v2.2.8-beta0 - Compress workflow and prompt/runtime updates

What's Changed

- Added `/dcp decompress` and `/dcp recompress` command workflows with better compression status and notification behavior.

- Added custom prompt overrides.

- Improved `compress` robustness with graceful fallback handling and fixes for nested compression protected-tool injection.

Full Changelog: v2.2.6-beta0...v2.2.8-beta0

v2.2.6-beta0 - Compression nudge bounds and ID consistency

What's Changed

- Added max/min nudge limits for compress guidance.

- Tightened message-id injection for ignored user messages and first subagent prompt messages.

- Added subagent safety guidance to system prompts.

- Bumped package metadata to 2.2.6-beta0.

Config Changes

- Rename `compress.contextLimit` -> `compress.maxContextLimit`.

- Rename `compress.modelLimits` -> `compress.modelMaxLimits`.

- Add optional lower-bound settings: `compress.minContextLimit` and `compress.modelMinLimits`.

```json
"compress": {
    "maxContextLimit": "80%",
    "minContextLimit": 30000,
    "modelMaxLimits": {
        "openai/gpt-5.3-codex": 120000,
        "anthropic/claude-sonnet-4.6": "75%"
    },
    "modelMinLimits": {
        "openai/gpt-5.3-codex": 30000,
        "anthropic/claude-sonnet-4.6": "20%"
    }
}
```

Defaults:

- `compress.maxContextLimit`: `100000`

- `compress.minContextLimit`: `30000`
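The settings above mix absolute token counts with percentage strings. A minimal sketch of how such values might be resolved for a given model follows. It assumes that percentages are relative to the model's context window and that per-model entries override the global value; neither behavior is confirmed by these notes. The type and function names and the window sizes in the example are hypothetical.

```typescript
// A limit is either an absolute token count or a percentage string such as "80%".
type Limit = number | string

interface CompressLimits {
    maxContextLimit: Limit
    minContextLimit: Limit
    modelMaxLimits?: Record<string, Limit>
    modelMinLimits?: Record<string, Limit>
}

// Assumption: a percentage is taken relative to the model's context window.
function toTokens(limit: Limit, contextWindow: number): number {
    if (typeof limit === "number") return limit
    const match = /^(\d+(?:\.\d+)?)%$/.exec(limit.trim())
    if (!match) throw new Error(`Unsupported limit value: ${limit}`)
    return Math.floor((Number(match[1]) * contextWindow) / 100)
}

// Assumption: a per-model entry, when present, overrides the global value.
function resolveLimits(config: CompressLimits, modelId: string, contextWindow: number) {
    const max = config.modelMaxLimits?.[modelId] ?? config.maxContextLimit
    const min = config.modelMinLimits?.[modelId] ?? config.minContextLimit
    return { max: toTokens(max, contextWindow), min: toTokens(min, contextWindow) }
}

const exampleConfig: CompressLimits = {
    maxContextLimit: "80%",
    minContextLimit: 30000,
    modelMaxLimits: { "openai/gpt-5.3-codex": 120000, "anthropic/claude-sonnet-4.6": "75%" },
    modelMinLimits: { "openai/gpt-5.3-codex": 30000, "anthropic/claude-sonnet-4.6": "20%" },
}

// The 200000-token window is an illustrative figure, not a documented value.
console.log(resolveLimits(exampleConfig, "anthropic/claude-sonnet-4.6", 200000))
// { max: 150000, min: 40000 }
```

A model without a per-model entry falls back to the global `maxContextLimit` and `minContextLimit` in this sketch.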

Full Changelog: v2.2.5-beta0...v2.2.6-beta0

v2.2.5-beta0 - Subagent context and compression state improvements

What's Changed

- Added experimental subagent support: `"experimental": { "allowSubAgents": true }`

- `/undo` properly undoes (?) compressions done in the undone messages

- Bumped package metadata to 2.2.5-beta0.

Full Changelog: v2.2.3-beta0...v2.2.5-beta0

v2.1.8 - Version bump

What's Changed

- fix(hooks): append system prompt to last output part

- fix: invert text part rejection logic to target claude models

- chore: add python artifacts to gitignore

Full Changelog: v2.1.7...v2.1.8

v2.2.3-beta0

Beta release 2.2.3-beta0